OpenAI on Friday revealed that it banned a set of accounts that used its ChatGPT tool to develop a suspected artificial intelligence (AI)-powered surveillance tool.

The social media listening tool is said to likely originate from China and is powered by one of Meta’s Llama models, with the accounts in question using the AI company’s models to generate detailed descriptions and analyze documents for an apparatus capable of collecting real-time data and reports about anti-China protests in the West and sharing the insights with Chinese authorities.

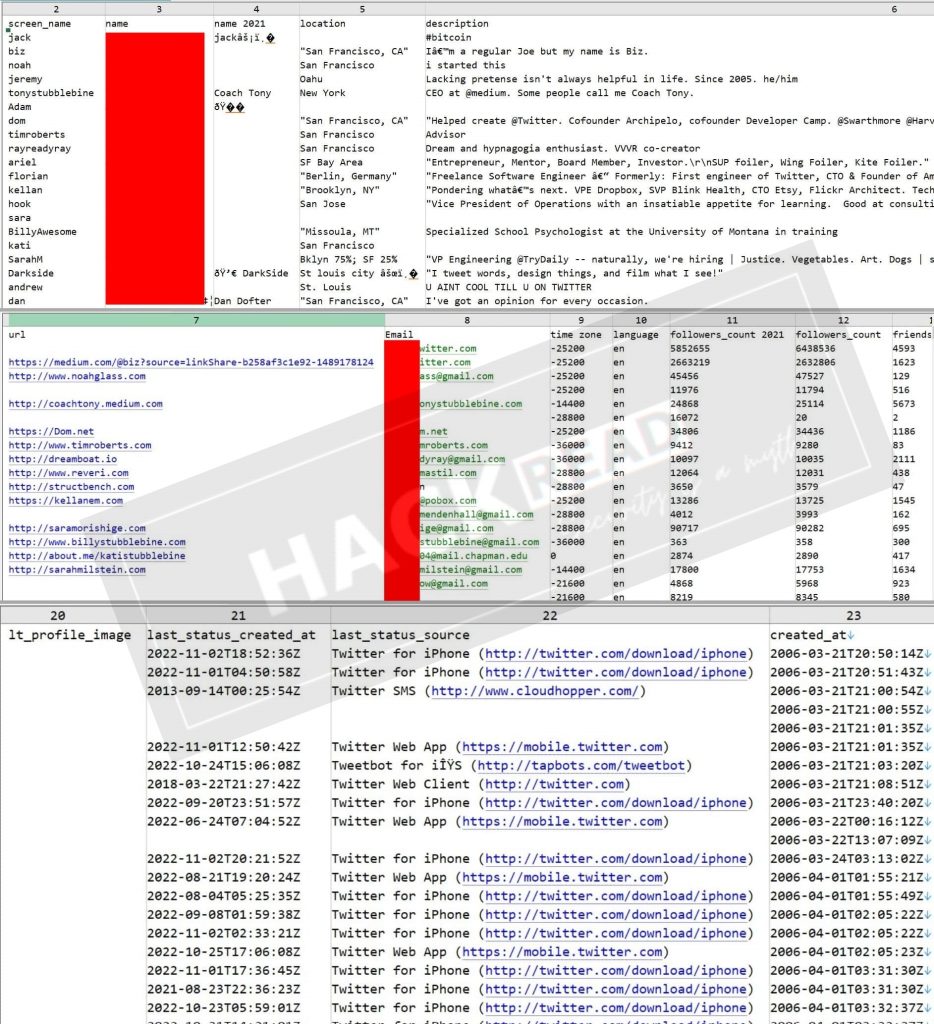

The campaign has been codenamed Peer Review owing to the “network’s behavior in promoting and reviewing surveillance tooling,” researchers Ben Nimmo, Albert Zhang, Matthew Richard, and Nathaniel Hartley noted, adding that the tool is designed to ingest and analyze posts and comments from platforms such as X, Facebook, YouTube, Instagram, Telegram, and Reddit.

In one instance flagged by the company, the actors used ChatGPT to debug and modify source code that is believed to run the monitoring software, referred to as “Qianyue Overseas Public Opinion AI Assistant.”

Besides using its model as a research tool to surface publicly available information about think tanks in the United States, and government officials and politicians in countries like Australia, Cambodia and the United States, the cluster has also been found to leverage ChatGPT access to read, translate and analyze screenshots of English-language documents.

Some of the images were announcements of Uyghur rights protests in various Western cities, and were likely copied from social media. It is currently not known if these images were authentic.

OpenAI also said it disrupted several other clusters that were found abusing ChatGPT for various malicious activities:

- Deceptive Employment Scheme – A network from North Korea linked to the fraudulent IT worker scheme that was involved in the creation of personal documentation for fictitious job applicants, such as resumés, online job profiles and cover letters, as well as coming up with convincing responses to explain unusual behaviors like avoiding video calls, accessing corporate systems from unauthorized countries or working irregular hours. Some of the bogus job applications were then shared on LinkedIn.

- Sponsored Discontent – A network likely of Chinese origin that was involved in the creation of social media content in English and long-form articles in Spanish that were critical of the United States, and subsequently published by Latin American news websites in Peru, Mexico, and Ecuador. Some of the activity overlaps with a known activity cluster dubbed Spamouflage.

- Romance-baiting Scam – A network of accounts that was involved in the translation and generation of comments in Japanese, Chinese, and English for posting on social media platforms including Facebook, X and Instagram in connection with suspected Cambodia-origin romance and investment scams.

- Iranian Influence Nexus – A network of five accounts that was involved in the generation of X posts and articles that were pro-Palestinian, pro-Hamas, and pro-Iran, and anti-Israel and anti-U.S., and shared on websites associated with Iranian influence operations tracked as the International Union of Virtual Media (IUVM) and Storm-2035. One of the banned accounts was used to create content for both operations, indicative of a “previously unreported relationship.”

- Kimsuky and BlueNoroff – A network of accounts operated by North Korean threat actors that was involved in gathering information related to cyber intrusion tools and cryptocurrency-related topics, and debugging code for Remote Desktop Protocol (RDP) brute-force attacks.

- Youth Initiative Covert Influence Operation – A network of accounts that was involved in the creation of English-language articles for a website named “Empowering Ghana” and social media comments targeting the Ghana presidential election.

- Task Scam – A network of accounts likely originating from Cambodia that was involved in the translation of comments between Urdu and English as part of a scam that lures unsuspecting people into jobs performing simple tasks (e.g., liking videos or writing reviews) in exchange for earning a non-existent commission, accessing which requires victims to part with their own money.

The development comes as AI tools are being increasingly used by bad actors to facilitate cyber-enabled disinformation campaigns and other malicious operations.

Last month, Google Threat Intelligence Group (GTIG) revealed that over 57 distinct threat actors with ties to China, Iran, North Korea, and Russia used its Gemini AI chatbot to improve multiple phases of the attack cycle and conduct research into topical events, or perform content creation, translation, and localization.

“The unique insights that AI companies can glean from threat actors are particularly valuable if they are shared with upstream providers, such as hosting and software developers, downstream distribution platforms, such as social media companies, and open-source researchers,” OpenAI said.

“Similarly, the insights that upstream and downstream providers and researchers have into threat actors open up new avenues of detection and enforcement for AI companies.”