Human-to-human communication is naturally multimodal, involving a mix of spoken words, visual cues, and real-time adjustments. With the Multimodal Live API for Gemini, we have achieved this same level of naturalness in human-computer interaction. Imagine AI conversations that feel more interactive, where you can use visual inputs and receive context-aware solutions in real time, seamlessly blending text, audio, and video. The Multimodal Live API for Gemini 2.0 enables this type of interaction and is available in Google AI Studio and the Gemini API. This technology allows you to build applications that respond to the world as it happens, leveraging real-time data.

How it works

The Multimodal Live API is a stateful API that uses WebSockets to facilitate low-latency, server-to-server communication. The API supports tools such as function calling, code execution, and search grounding, and it can combine multiple tools within a single request, enabling comprehensive responses without the need for multiple prompts. This allows developers to create more efficient and complex AI interactions.
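To make the idea of combining several tools in one request more concrete, here is a minimal sketch of the configuration message a client might send when a session opens. The field names (`setup`, `generation_config`, `response_modalities`, `google_search`, `code_execution`, `function_declarations`), the model identifier, and the `get_weather` function are assumptions for illustration, so check them against the current API reference before relying on them. The sketch only builds the JSON text and does not open a connection.

```python
import json

# Assumed model identifier; check the current model list before use.
DEFAULT_MODEL = "models/gemini-2.0-flash-exp"


def build_setup_message(model=DEFAULT_MODEL, tools=None, response_modalities=("TEXT",)):
    """Return the JSON text of a hypothetical first ("setup") message for a session."""
    setup = {
        "model": model,
        "generation_config": {"response_modalities": list(response_modalities)},
    }
    if tools:
        # Only include the key when at least one tool is configured.
        setup["tools"] = list(tools)
    return json.dumps({"setup": setup})


# Several tools combined in a single session configuration.
tools = [
    {"google_search": {}},
    {"code_execution": {}},
    {"function_declarations": [{
        "name": "get_weather",  # hypothetical function, for illustration only
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "OBJECT",
            "properties": {"city": {"type": "STRING"}},
            "required": ["city"],
        },
    }]},
]

message = build_setup_message(tools=tools)
print(message)
```

Because the API is stateful, a message like this would be sent once at the start of the session, and the configured tools would then be available for every later turn on the same connection.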

Key features of the Multimodal Live API include:

- Bidirectional streaming: Allows for concurrent sending and receiving of text, audio, and video data.

- Sub-second latency: Outputs the first token in 600 milliseconds, aligning response times with human expectations for a seamless exchange.

- Natural voice conversations: Supports human-like voice interactions, including the ability to interrupt and features like voice activity detection, enabling more fluid dialogue with AI.

- Video understanding: Provides the ability to process and understand video input, enabling the model to combine audio and video context for a more informed and nuanced response. This contextual awareness brings another layer of richness to the interaction.

- Tool integration: Facilitates the integration of multiple tools within a single API call, extending the API's capabilities and allowing it to perform actions on behalf of the user to solve complex tasks.

- Steerable voices: Provides a selection of 5 distinct voices with a high level of expressiveness, capable of conveying a wide spectrum of emotions. This allows for a more personalized and engaging user experience.
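Bidirectional streaming is easiest to picture as two loops running at the same time, one sending input chunks and one reading responses. The sketch below illustrates that pattern with Python's `asyncio`. `FakeConnection` is a hypothetical in-memory stand-in for a WebSocket, not part of any Gemini SDK, and the chunk names are placeholders, so the example runs without a network or an API key.

```python
import asyncio


class FakeConnection:
    """In-memory stand-in for a WebSocket: answers each chunk with a 'response'."""

    def __init__(self):
        self._inbox = asyncio.Queue()

    async def send(self, chunk):
        await asyncio.sleep(0)  # yield control, as a real network send would
        await self._inbox.put(f"response to {chunk}")

    async def close(self):
        await self._inbox.put(None)  # sentinel: no more responses will arrive

    async def receive(self):
        return await self._inbox.get()


async def send_chunks(conn, chunks):
    """Stream input chunks (for example audio or video frames) to the server."""
    for chunk in chunks:
        await conn.send(chunk)
    await conn.close()


async def receive_responses(conn):
    """Collect responses as they arrive, until the connection closes."""
    responses = []
    while True:
        item = await conn.receive()
        if item is None:
            break
        responses.append(item)
    return responses


async def run_session(chunks):
    conn = FakeConnection()
    # Both directions run concurrently; neither waits for the other to finish.
    _, responses = await asyncio.gather(
        send_chunks(conn, chunks), receive_responses(conn)
    )
    return responses


if __name__ == "__main__":
    print(asyncio.run(run_session(["audio-1", "audio-2", "video-1"])))
```

With a real connection, the same structure lets the receiving loop notice an interruption or a tool call while the sending loop is still streaming microphone or camera input.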

Multimodal live streaming in action

The Multimodal Live API enables a variety of real-time, interactive applications. Here are a few examples of use cases where this API can be applied effectively:

- Real-time virtual assistants: Imagine an assistant that observes your screen and offers tailored advice in real time, telling you where to find what you're looking for or executing actions on your behalf.

- Adaptive educational tools: The API supports the development of educational applications that adapt to a student's learning pace. For example, a language learning app could adjust the difficulty of exercises based on a student's real-time pronunciation and comprehension.

To help you explore this new functionality and kick-start your own projects, we've created a number of demo applications showcasing real-time streaming capabilities:

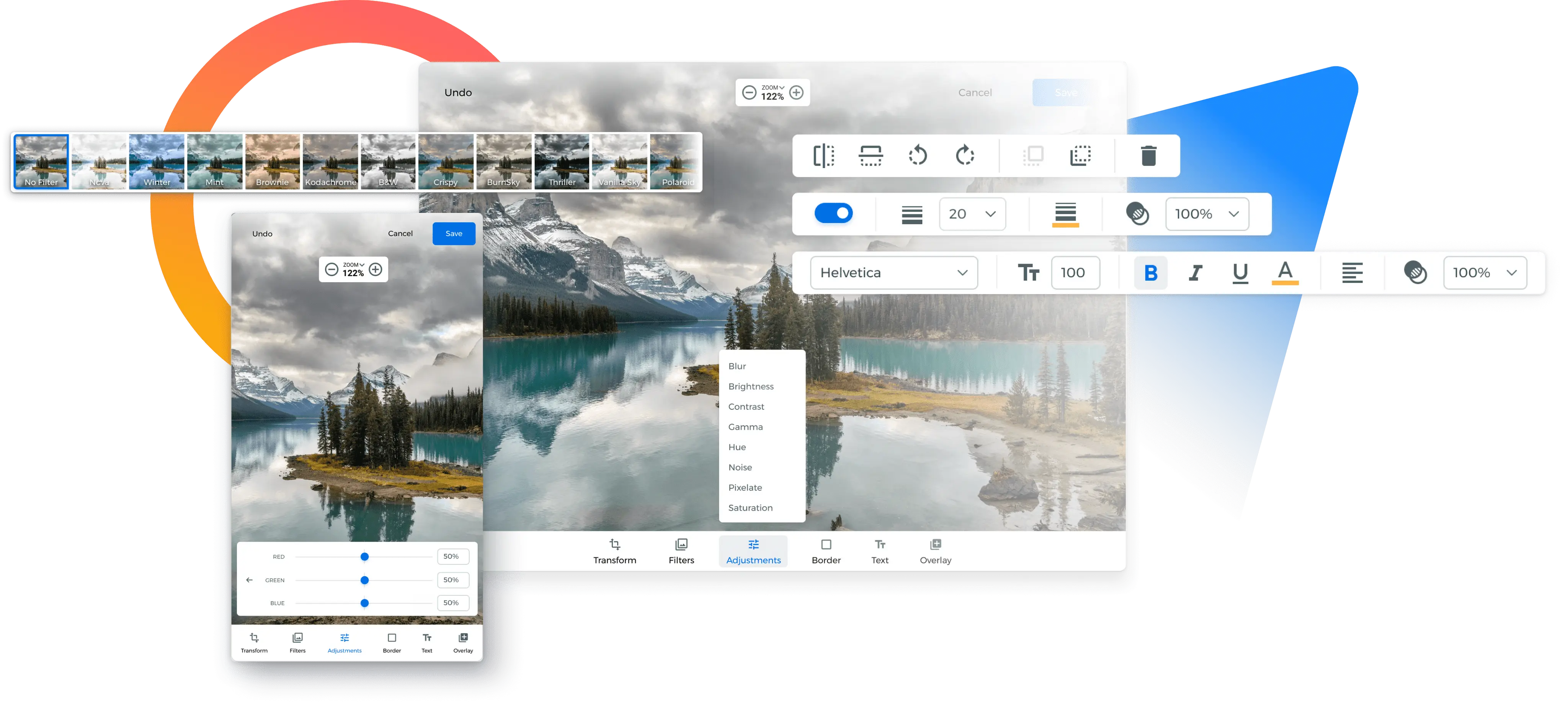

A starter web application for streaming mic, camera, or screen input. A perfect base for your creativity.

Getting started with the Multimodal Live API

Ready to dive in? Experiment with Multimodal Live Streaming directly in Google AI Studio for a hands-on experience. Or, for full control, grab the detailed documentation and code samples to start building with the API today.

We've also partnered with Daily to provide a seamless WebRTC SDK integration built with Pipecat, the open source framework. Pipecat's cross-platform support lets you add real-time capabilities effortlessly to your web and native mobile apps. Daily, which provides SDKs and global infrastructure for ultra-low-latency voice, video, and AI, maintains Pipecat with contributions from the community. Check out Daily's integration guide to get started building.

We're excited to see your creations. Share your feedback and the amazing applications you build with the new API!